👋 Hi, I'm Flawnson

I'm a former ML engineer turned full-stack developer. I used to work a lot with Graph Neural Networks and NLP models.

I believe in function over form, that done is better than perfect, and that data outweighs intuition.

I'm an avid 🎹 player, cautious 🏍️ rider, casual 🏸 enjoyer, and an abysmal 🥏 tosser.

Given free time, I pander to and moderate the (small but growing) Geometric Deep Learning subreddit.

👨‍💻 What I'm working on

I build products that make the world a better place.

Most recently I've been working with my friend and co-founder Albert Wang on Comend. We build tools for the rare disease community to help them become research ready.

I drink a lot of 🍵 and enjoy the culture. I'm building Teatico, an encyclopedia of tea products with my girlfriend Ramy Zhang.

I release original piano compositions on YouTube and Spotify.

📚 What I've worked on

Comend - Co-founder and CTO

Feb 2023 - Present - Toronto, Canada

- Built and launched our core platform, helping patient groups organize, share, and find partners for their research.

- Built and launched Librarey, a search platform that helps rare disease families find resources.

- Grew the team to 4 and onboarded over 50 patient groups with over 140 research plans (and counting).

- Led engineering efforts across all products: coordinated workloads, managed tickets, built E2E and unit testing suites, organized the monorepo and project architecture, and monitored deployments and containers.

- Raised over $700K in pre-seed funding to date from Character Labs, Drive Capital, and PharmStars.

Entrepreneur First (EF) - Founder in Residence

March 2022 - Feb 2023 - Toronto, Canada

- Went through the formal process of finding strong co-founder fit, identifying problems worth solving, and having useful customer conversations.

- Iterated on many ideas through many rounds of product validation with several potential co-founders.

- Got very comfortable with sales tools like LinkedIn Sales Navigator, Apollo.io, Klenty, and Reply.io.

Kebotix Inc. - Machine Learning Developer Intern

Sept 2020 - May 2021 - Boston, MA

- Developed a generative Transformer model optimized with a genetic algorithm for discovering organic and inorganic molecules of interest.

- Contributing author of "Molecular discovery with conditional generative transformers coupled with genetic algorithms" (unpublished).

Relation Therapeutics - Machine Learning Research Intern

Sept 2019 - May 2020 - London, UK

- Developed novel GNN models for predicting protein druggability and classifying protein types.

- Built a tuning and testing pipeline to optimize model parameters and run configurations.

- Contributing author of "Sparse Dynamic Distribution Decomposition: Efficient Integration of Trajectory and Snapshot Time Series Data".

ML for Chem/Bio-informatics - Personal Projects

Oct 2018 - June 2019 - Toronto, Canada

- Built a series of pipelines for preprocessing several chemical and biological data assays.

- Built various predictive, multivariate, and generative ML models trained on the processed data.

- Wrote a series of articles on the various methods of Geometric Deep Learning and Graph Learning.

Canadian Imperial Bank of Commerce (CIBC) - Summer Intern

June 2018 - Sept 2018 - Toronto, Canada

- Worked at CIBC Live Labs as a front-end developer and designer for an AR app.

- Designed the app's basic UI/UX and collaborated with backend developers throughout development.

- Performed user/guerrilla testing of the prototype and aggregated feedback for improvements.

📜 Publications

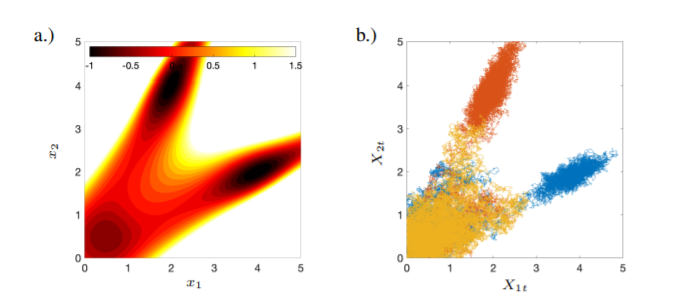

Sparse Dynamic Distribution Decomposition: Efficient Integration of Trajectory and Snapshot Time Series Data

Jake P. Taylor-King, Cristian Regep, Jyothish Soman, Flawnson Tong, Catalina Cangea, Charlie Roberts

DDD allows for the fitting of continuous-time Markov chains over these basis functions and as a result continuously maps between distributions. The number of parameters in DDD scales by the square of the number of basis functions; we reformulate the problem and restrict the method to compact basis functions which leads to the inference of sparse matrices only -- hence reducing the number of parameters.

❓ Q&A

What do you build with?

TypeScript, Python, SQL databases, Tailwind. Container-first.

What's your setup?

JetBrains IDEs with vim bindings for web development. Codex occasionally. Terminal-heavy workflows back when I trained models.

What hardware are you on?

At home, I work on my custom-built rig (AMD Ryzen 9 9950X, RTX 5090). At work, I use a 2020 MacBook Pro M1.

What's your dev style?

Fast iteration. Idea → deployed quickly. Shipping > overengineering.

How do you decide what to build?

Talk to users, look at data, and estimate how much positive impact I'd make.

Favorite AI tools?

I'm model agnostic and default to my locally-run SOTA models (currently Qwen3-Coder 30B) and switch between GPT, Gemini, and Claude situationally.

How do you use AI?

Well-scoped, small-context-window, one-shottable tasks, or exploring multiple ideas/avenues in parallel.

What do you suck at?

Writing and updating tickets, working on something I don't believe in, stepping away from a problem.

What's your cat's name?

Paloma is a tiny 4.5-pound Siamese 🐱 we adopted from a rescue.

What bike do you have?

A 2023 Yamaha MT-03.